Blog Archives

Day 98: More CI Writing

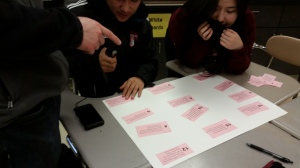

So, yesterday, most of the groups got the statements sorted. Today they will create a poster of the incorrect statements and write underneath each one what the error is. I found a great explanation by Bock about the types of interpretation errors with examples and explanations. My students used this document to help guide them. Eventually they were able not only to identify correct and incorrect statements, but also to articulate what made the interpretations incorrect. YAY!

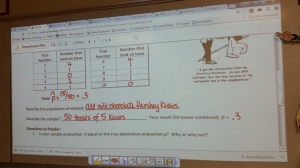

Day 95: MC Mondays

Late last year, a discussion on Twitter revolved around how to give AP Stats students more regular experience with multiple choice questions. One idea put forth by @druink was to have an MC Monday experience. She shared a form she created and asked for input and collaboration around the viability of the form: number of questions, student reflection, etc.

I wanted to use the form but tweak it some more to allow me to track both general student progress as well as progress in the four strands of the AP Stats curriculum: Exploring data, Generating data, Probability and Inference methods. Part of this is to complete my teacher evaluation student growth component, but also to help me see if there are areas that need revisiting later in the course (of course, there always are, but in the past it was hit or miss rather than data driven).

One hindrance for tracking via components was how to identify each question easily so it didn’t become a time-suck. In addition, I needed a reasonably easy way to generate questions that were essentially at the AP level. So my department purchased a new ExamView test generator for our text which already had the questions identified by the AP standards. I also found that GradeCam can assign a learning target to questions. Unfortunately, the available targets were CCSS or state standards; luckily, in the CCSS there are “almost matching” statements around the 4 big strands, so I could label questions. Because I have been using GradeCam for unit tests as well as Midterms and Finals, students are very comfortable with the process of scanning their answers for me.

So today was the first day of trying out the process and I think it went great! Now I’ll be able to track long-term retention of the key ideas as well as monitor individual students’ growth (or lack thereof).
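For anyone who wants to try the strand-level tracking outside of GradeCam, here is a minimal Python sketch of the idea. It is not GradeCam's export format or my actual gradebook: the function name `percent_correct_by_strand` and the sample responses are made up for illustration, and it assumes each question has already been labeled with one of the four strands.

```python
from collections import defaultdict

def percent_correct_by_strand(responses):
    """Return percent correct per strand.

    responses: iterable of (strand, is_correct) pairs, one per question.
    This input shape is an assumption, not a real GradeCam export.
    """
    totals = defaultdict(int)
    correct = defaultdict(int)
    for strand, is_correct in responses:
        totals[strand] += 1
        if is_correct:
            correct[strand] += 1
    return {strand: 100 * correct[strand] / totals[strand] for strand in totals}

# Hypothetical MC Monday results for one student (made-up data).
sample_responses = [
    ("Exploring data", True),
    ("Exploring data", False),
    ("Generating data", True),
    ("Probability", False),
    ("Probability", False),
    ("Inference methods", True),
]

strand_scores = percent_correct_by_strand(sample_responses)
for strand, score in strand_scores.items():
    print(f"{strand}: {score:.0f}% correct")
```

Running the same tally each Monday and comparing the numbers over time would be one way to spot a strand that needs revisiting.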

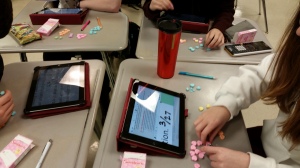

Day 93: Be Mine Statistics

I’m a sucker for all things Valentine and hearts. So why wouldn’t I have an AP Stats activity using Sweetheart conversation hearts? This year, my students indulge me better than most, at least feigning enthusiasm for these corny activities.

This activity was all about the proportion of pink hearts. The big question was, “What is the true proportion of pink Sweetheart conversation hearts?” They had to count the number of pink hearts and then determine a sample proportion for their box…we had to discuss our willingness to accept each box as a random sample from the population of all Sweetheart conversation hearts.

From there, they began their introduction to inference methods via the confidence interval. Using their sample value, they explored the possible shape of the sampling distribution, how it related to their previous understanding of sampling distributions, what conditions might need to be in place, etc.
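To make that concrete, here is a small Python sketch of the one-proportion z-interval the activity builds toward. The counts are made up (12 pink hearts in a box of 48), not data from my classes, and the function names are just for illustration. It assumes we are willing to treat the box as a random sample and uses the usual "at least 10 successes and 10 failures" check as the large counts condition.

```python
import math

def one_proportion_interval(successes, n, z=1.96):
    """Return (p_hat, lower, upper) for a one-proportion z-interval.

    z=1.96 is the critical value for roughly 95% confidence.
    """
    p_hat = successes / n
    standard_error = math.sqrt(p_hat * (1 - p_hat) / n)
    margin = z * standard_error
    return p_hat, p_hat - margin, p_hat + margin

def large_counts_ok(successes, n, threshold=10):
    """Check that both the successes and the failures reach the threshold."""
    return successes >= threshold and (n - successes) >= threshold

# Hypothetical box: 12 pink hearts out of 48 (made-up numbers).
pink, box_size = 12, 48
p_hat, lower, upper = one_proportion_interval(pink, box_size)

print(f"Large counts condition met: {large_counts_ok(pink, box_size)}")
print(f"Sample proportion: {p_hat:.3f}")
print(f"Approximate 95% interval: ({lower:.4f}, {upper:.4f})")
```

With these made-up counts the sample proportion is 0.25, the standard error is 0.0625, and the interval runs from about 0.1275 to 0.3725.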

The next step is to work on interpreting the confidence interval as well as the confidence level…their first foray into technical writing!

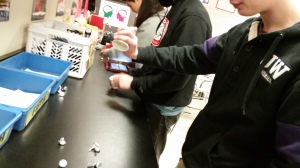

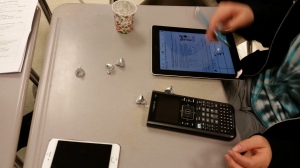

Day 90: Gimme A Kiss

We had lots of Hershey Kisses left over from Tolo, so I decided to rewrite the intro activity to confidence intervals to revolve around tossing Hershey Kisses. It is based on an activity I found on the internet by Lisa Brock and Carol Sikes, who found the activity on the website for Aaron Rendahl’s STAT 4102 activities at University of Minnesota. I revised it some more…don’t we all tweak things to make them our own?